An Introduction to Semantic Video Analysis & Content Search

Semantic video analysis & content search (SVACS) uses machine learning and natural language processing (NLP) to make media clips easy to query, discover, and retrieve. It can also extract and classify relevant information from within the videos themselves.

Social media, smartphones, and advanced video recording tools have all contributed to an explosion in the use of video by people and businesses.

As the number of video files grows, so does the need to easily and accurately search and retrieve specific content found within them. With video content AI, users can query by topics, themes, people, objects, and other entities. This makes it efficient to retrieve full videos, or only the relevant clips, and to analyze the information embedded in them.

A video sentiment analysis tool can benefit you if you are a:

- Digital marketer
- Social media manager
- Social marketer
- Brand manager
- Video editor
- Broadcaster
- Educator
- Podcaster, etc.

Semantic video analysis & content search uses computational linguistics to help break down video content. Simply put, it uses language denotations to categorize different aspects of video content and then uses those classifications to make it easier to search and find high-value footage.

This process is also referred to as a semantic approach to content-based video retrieval (CBVR).

What is semantic analysis?

Semantic analysis aims to identify and extract the intended meaning in human language as expressed in text or speech. It answers the simple question “what does this text data mean?”

Semantic technologies such as text analytics, sentiment analysis, and semantic search empower computers to quickly process text and speech using natural language processing. They automate the process of discovering the correct meaning of words and phrases in text-based computer files.

Semantic analysis can also be applied to video content analysis and retrieval.

What is semantic video analysis & content search?

Video is the digital reproduction and assembly of recorded images, sounds, and motion. It can be understood as an advanced form of language use. Each frame of a video carries multiple content components, such as audio, images, objects, and people. All of these have semantic or linguistic meaning, or can be referred to using words.

Semantic video analysis is a way of using automated semantic analysis to understand the meaning that lies in video content. This improves the depth, scope, and precision of possible content retrieval in the form of footage or video clips.

This type of video content AI uses natural language processing to focus on the content and internal features within a video. Companies can use SVACS to determine the presence of specific words, objects, themes, topics, sentiments, characters, or entities. Text analytics, using machine learning, can quickly and easily identify them, and allow anyone who is searching for specific information in the video to retrieve it quickly and accurately.

SVACS begins by reducing various components that appear in a video to a text transcript and then draws meaning from the results. This semantic analysis improves the search and retrieval of specific text data based on its automated indexing and annotation with metadata. Using natural language processing and machine learning techniques, like named entity recognition (NER), it can extract named entities like people, locations, and topics from the text.
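The entity-tagging step described above can be sketched in a few lines. This is only an illustration: a small hand-written lookup table (`GAZETTEER`, a hypothetical name) stands in for a trained NER model, and the function names `extract_entities` and `annotate` are assumptions, not part of any particular product.

```python
import re
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical gazetteer. A real system would use a trained NER model;
# this lookup table only illustrates the shape of the output.
GAZETTEER = {
    "Biden": "PERSON",
    "Zara": "ORG",
    "Walmart": "ORG",
    "YouTube": "ORG",
    "TikTok": "ORG",
}


@dataclass(frozen=True)
class Entity:
    text: str
    label: str
    start: int  # character offset where the mention begins
    end: int    # character offset just past the mention


def extract_entities(transcript: str) -> List[Entity]:
    """Find every gazetteer name in the transcript, in reading order."""
    entities = []
    for name, label in GAZETTEER.items():
        pattern = r"\b" + re.escape(name) + r"\b"
        for match in re.finditer(pattern, transcript):
            entities.append(Entity(match.group(), label, match.start(), match.end()))
    return sorted(entities, key=lambda e: e.start)


def annotate(transcript: str) -> Dict[str, List[str]]:
    """Build simple metadata: entity label -> sorted unique names."""
    metadata: Dict[str, set] = {}
    for entity in extract_entities(transcript):
        metadata.setdefault(entity.label, set()).add(entity.text)
    return {label: sorted(names) for label, names in metadata.items()}
```

Calling `annotate` on a transcript returns the kind of metadata that can be attached to a clip so it can later be found by person or brand.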

The tagging makes it possible for users to find the specific content they want quickly and easily. For example, if a video news editor needs to find various clips of U.S. President Biden in a massive video library, SVACS can help them do it in seconds. If brands like Zara or Walmart want to find every time their apparel is mentioned and reviewed on YouTube or TikTok, a simple YouTube sentiment analysis or TikTok video analysis can do it with lightning speed.

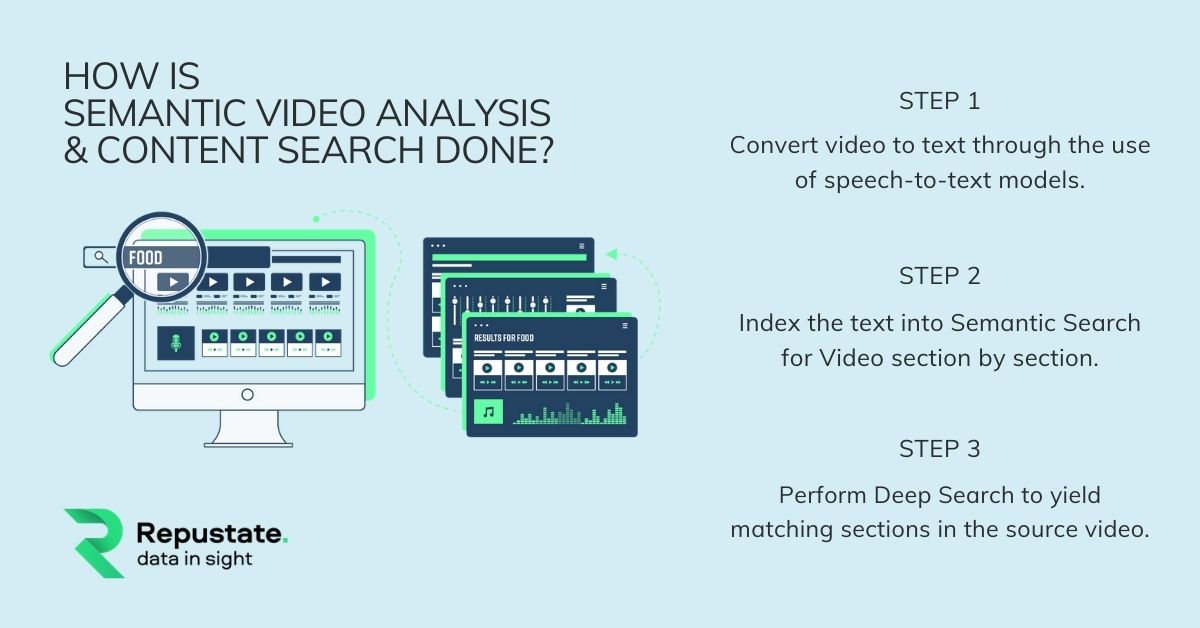

How is Semantic Video Analysis & Content Search done?

There are many automated video sentiment analysis tools out there. Repustate is one of the few companies that has built a Deep Search tool for semantic video analysis. It processes a video in three steps:

Step 1: Converting the video to a textual format by using speech-to-text models. These models are specific to each language, and they produce a transcript of the speech along with a timestamp for each word.

Step 2: Indexing the text for semantic search, section by section. The video is divided into smaller sections based on pauses or changes of speaker. Analyzing each section on its own provides enough context to disambiguate the entities.

Step 3: Performing Deep Search. Now that all the timestamps of the video associated with entities, themes, and topics, along with the associated metadata, are gathered, Deep Search will yield matching sections from the source video.
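The three steps above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not Repustate's implementation: the speech-to-text output is assumed to be a list of `(token, start_sec, end_sec)` tuples, sections are split only on pauses (not on speaker changes), and plain keyword matching stands in for semantic and entity matching. All names here (`split_on_pauses`, `build_index`, `search`, `max_gap`) are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Assumed shape of speech-to-text output: (token, start_sec, end_sec).
Word = Tuple[str, float, float]


@dataclass
class Section:
    start: float  # timestamp of the first word, in seconds
    end: float    # timestamp of the last word's end, in seconds
    text: str


def _to_section(words: List[Word]) -> Section:
    return Section(words[0][1], words[-1][2], " ".join(w[0] for w in words))


def split_on_pauses(words: List[Word], max_gap: float = 1.0) -> List[Section]:
    """Start a new section whenever the silence between words exceeds max_gap."""
    sections: List[Section] = []
    current: List[Word] = []
    for word in words:
        if current and word[1] - current[-1][2] > max_gap:
            sections.append(_to_section(current))
            current = []
        current.append(word)
    if current:
        sections.append(_to_section(current))
    return sections


def build_index(sections: List[Section]) -> Dict[str, List[int]]:
    """Map each lowercase term to the positions of the sections containing it."""
    index: Dict[str, List[int]] = defaultdict(list)
    for position, section in enumerate(sections):
        for term in set(section.text.lower().split()):
            index[term].append(position)
    return index


def search(query: str, sections: List[Section], index: Dict[str, List[int]]) -> List[Section]:
    """Return sections that contain every query term, in playback order."""
    terms = query.lower().split()
    if not terms:
        return []
    hits = set(index.get(terms[0], []))
    for term in terms[1:]:
        hits &= set(index.get(term, []))
    return [sections[i] for i in sorted(hits)]
```

Because each returned `Section` carries its start and end timestamps, a player could jump straight to the matching part of the source video rather than returning the whole file.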

What are the ways you can use video content AI?

Over the last five years, many industries have increased their use of video due to user growth, affordability, and ease of use. Video is used in areas such as education, marketing, broadcasting, entertainment, and digital libraries.

Here are just a few examples of how semantic video analysis and search can be used:

- Social Media Listening:

Many companies that once looked for consumer insights only on text-based platforms like Facebook and Twitter are now turning to video content as the next source of those insights. Platforms such as TikTok, YouTube, and Instagram have pushed social media listening into the world of video. SVACS can help social media companies mine consumer insights from video-dominated platforms more effectively.

- Brand Insights:

Brands are always in need of customer feedback, whether intentional or social. A wealth of customer insights can be found in video reviews that are posted on social media. These reviews are of great importance as they are authentic and user-generated. Brands can use video sentiment analysis to extract high-value insights from video to strategically improve various areas such as products, marketing campaigns, and customer service.

- Podcasts:

Semantic analysis & content search makes podcast files easily searchable by semantically indexing their content. Users can search large audio catalogs for the exact content they want without any manual tagging. SVACS gives customer service teams, podcast producers, marketing departments, and heads of sales the power to search audio files by specific topics, themes, and entities. These entities include celebrities, politicians, locations, and more. It automatically annotates your podcast data with semantic analysis information without any additional training requirements.

- User-Generated Content:

User-generated content plays a very big part in influencing consumer behavior. Consumers are always looking for authenticity in product reviews and that’s why user-generated videos get 10 times more views than brand content. Platforms like YouTube and TikTok provide customers with just the right forum to express their reviews, as well as access them.

As a marketer, it is not easy to keep track of thousands of hours of video content manually. TikTok video sentiment analysis lets you extract actionable insights from all that video content and shows you how your brand is performing.

Repustate’s AI-driven semantic analysis engine reveals what people say about your brand or product in more than 20 languages and dialects. Our tool can extract sentiment and brand mentions not only from videos but also from popular podcasts and other audio channels. Our intuitive video content AI solution creates a thorough and complete analysis of relevant video content by even identifying brand logos that appear in them.

Furthermore, its visualization feature gives you results in an easy-to-use and intuitive dashboard.

The Repustate semantic video analysis solution is available as an API and as an on-premises installation.

Mar 11, 2021

Jeremy Wemple

Dr. Ayman Abdelazem

Dr. Salah Alnajem, PhD

David Allen

Repustate Team
